Where MSS is the maximum segment size and P_loss is the probability of packet loss. The limitation caused by window size can be calculated as follows:

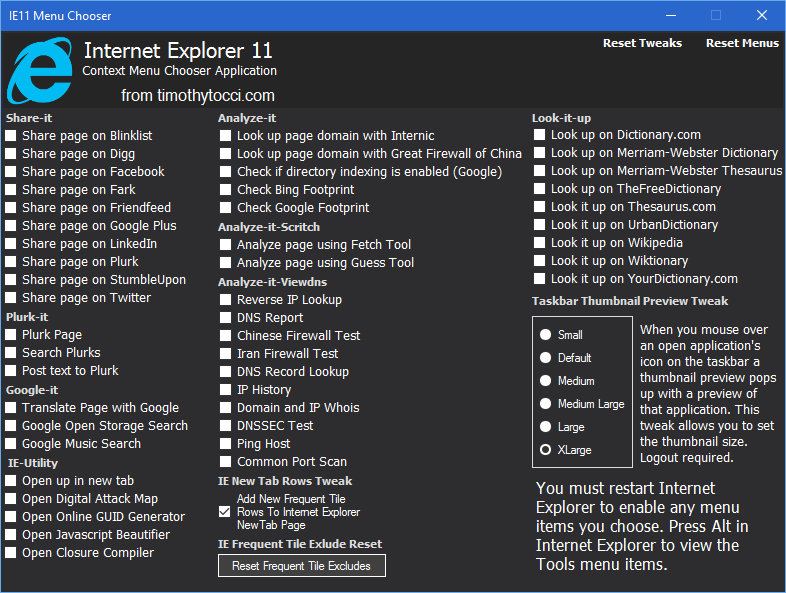
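The formula image is not recoverable from the text here, so the following is only a sketch under stated assumptions. It assumes the window limit is the usual bound Throughput ≤ RWIN / RTT (at most one receive window per round trip), and that the MSS and P_loss terms belong to the simplified loss bound Throughput ≤ MSS / (RTT × sqrt(P_loss)), written without any constant factor. The function names and example values are illustrative and do not come from the original text.

```python
import math


def window_limited_throughput(rwin_bytes: float, rtt_s: float) -> float:
    """Upper bound in bits/s, assuming at most one window is sent per RTT."""
    return rwin_bytes * 8 / rtt_s


def loss_limited_throughput(mss_bytes: float, rtt_s: float, p_loss: float) -> float:
    """Simplified loss bound in bits/s: MSS / (RTT * sqrt(P_loss)).

    Assumption: the constant factor used in some formulations is omitted.
    """
    if not 0 < p_loss <= 1:
        raise ValueError("p_loss must be in (0, 1]")
    return mss_bytes * 8 / (rtt_s * math.sqrt(p_loss))


if __name__ == "__main__":
    # Hypothetical inputs: 65,535-byte window, 1,460-byte MSS, 100 ms RTT.
    w = window_limited_throughput(65_535, 0.100)
    l = loss_limited_throughput(1_460, 0.100, 0.0001)
    print(f"window bound: {w / 1e6:.2f} Mbit/s")
    print(f"loss bound:   {l / 1e6:.2f} Mbit/s")
```

Under these assumptions, a connection can do no better than the smaller of the two bounds and the capacity of the slowest link in the path.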


Operating systems and protocols designed when networks were slower, some as recently as a few years ago, were tuned for BDPs orders of magnitude smaller, which limits the performance they can achieve.

The original TCP configurations supported TCP receive window size buffers of up to 65,535 (64 KiB - 1) bytes, which was adequate for slow links or links with small RTTs. Larger buffers are required by the high performance options described below.

Buffering is used throughout high performance network systems to handle delays in the system. In general, buffer size will need to be scaled proportionally to the amount of data "in flight" at any time. For very high performance applications that are not sensitive to network delays, it is possible to interpose large end-to-end buffering delays by putting intermediate data storage points in an end-to-end system, and then to use automated and scheduled non-real-time data transfers to get the data to their final endpoints.

Maximum achievable throughput for a single TCP connection is determined by different factors. One trivial limitation is the maximum bandwidth of the slowest link in the path. But there are also other, less obvious limits for TCP throughput. Bit errors can limit the connection, as can RTT.

In computer networking, RWIN (TCP Receive Window) is the amount of data that a computer can accept without acknowledging the sender. If the sender has not received acknowledgement for the first packet it sent, it will stop and wait, and if this wait exceeds a certain limit, it may even retransmit. This is how TCP achieves reliable data transmission. Even if there is no packet loss in the network, windowing can limit throughput. Because TCP transmits data up to the window size before waiting for the acknowledgements, the full bandwidth of the network may not always get used. See also: TCP window scale option and congestion window.
To give a practical example, two nodes communicating over a geostationary satellite link with a round-trip delay time (or round-trip time, RTT) of 0.5 seconds and a bandwidth of 10 Gbit/s can have up to 0.5×10^10 bits, i.e., 5 Gbit = 625 MB, of unacknowledged data in flight. Despite having much lower latencies than satellite links, even terrestrial fiber links can have very high BDPs because their link capacity is so large.
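The satellite arithmetic above can be checked with a short sketch. It assumes BDP is simply bandwidth multiplied by RTT, with decimal units (1 MB = 10^6 bytes). The function names and the fiber figures in the second call are illustrative and not taken from the original text.

```python
def bdp_bits(bandwidth_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product in bits: link capacity times round-trip time."""
    return bandwidth_bps * rtt_s


def bdp_bytes(bandwidth_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product in bytes (8 bits per byte)."""
    return bdp_bits(bandwidth_bps, rtt_s) / 8


if __name__ == "__main__":
    # Geostationary satellite example from the text: 10 Gbit/s, 0.5 s RTT.
    sat = bdp_bytes(10e9, 0.5)
    print(f"satellite: {sat / 1e6:.0f} MB in flight")

    # Hypothetical terrestrial fiber path: 100 Gbit/s, 40 ms RTT.
    fiber = bdp_bytes(100e9, 0.040)
    print(f"fiber:     {fiber / 1e6:.0f} MB in flight")
```

Even with an RTT more than ten times shorter, the hypothetical fiber path carries a BDP of the same order as the satellite link because its capacity is ten times larger.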

Bandwidth-delay product (BDP) is a term primarily used in conjunction with TCP to refer to the number of bytes necessary to fill a TCP "path", i.e., it is equal to the maximum number of simultaneous bits in transit between the transmitter and the receiver. High performance networks have very large BDPs.